With all the buzz around ChatGPT, there has naturally been much conversation (and consternation) about the impact it will have on education.

As someone who is tech-curious, and a writer/English teacher turned coder and developer, I find language models inherently interesting. However, one question I had was about their reliability. ChatGPT is great at composing sonnets or offering up information and insights, but can it write to a purpose? Will it be able to:

- create something accurate and fit for purpose

- not sound overly formulaic

- not just alter the prompt with synonyms

To put it simply, I was wondering: what does this technology look like in context?

Finding a starting point

Fairly evaluating the technology, and how it would look in a school context, is difficult. Schools are worlds unto themselves, filled with growing young minds and curricula that survey all of human knowledge. For this initial experiment, I wanted something with fixed, quantitative data: data that can be easily measured and has an objective meaning. Naturally, the easiest place to find this was the gradebook.

The gradebook would offer the opportunity to:

- Evaluate if the text generated would be contextually valid (for different classes and grades)

- Check differences in language use for struggling, passing and excelling students

- Understand the technology’s ability to adapt to similar but different situations (a Grade 11 visual arts class compared to a Biology class)

Understanding what I was building

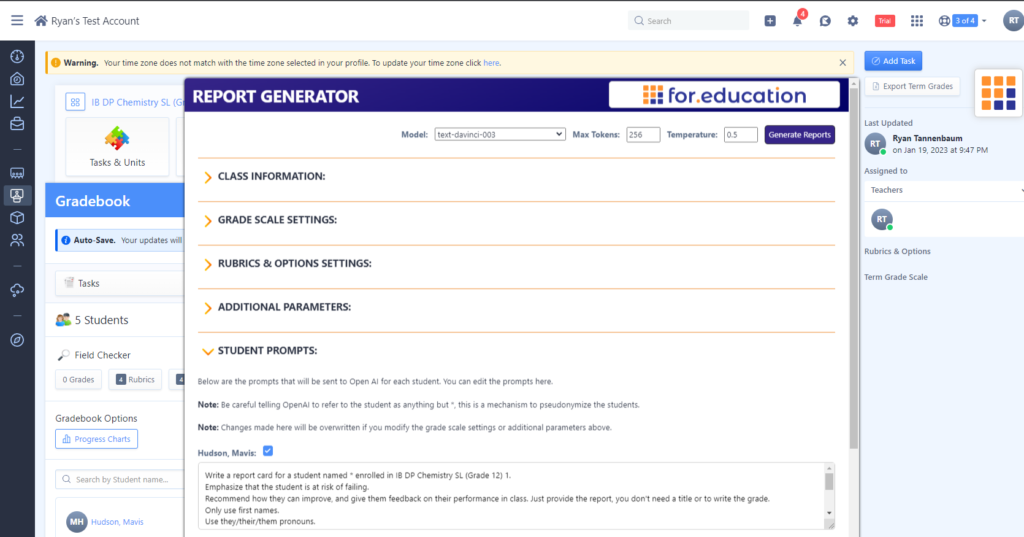

After the initial excitement of getting reports generated automatically, I quickly realized that I wasn’t building a report-writing solution; OpenAI had already done that. What I was actually developing was a prompt-building solution: I wanted to parse the information on the term reports page so that the extension could generate an adequate initial prompt for the teacher to build on.

Teachers put a lot of effort into assessment, and much of the information in a report card is already captured by the final grade and rubric assessments. By incorporating these into the generated prompt, the extension could give teachers a baseline that would hopefully produce an adequate comment with no further input needed.

Making it personal

Ultimately, though, a report is a personal comment offered by a teacher to a student based on the work completed. Not only should reports be contextual by default, they should also be editable by any teacher. That way, if a teacher wants to address a specific point for a certain student, they can easily prompt the AI to do so.

This seemed to be the happiest balance. Teachers could either personalize the prompt or generate a comment and personalize it afterwards. This gives them the freedom to contribute as much (or as little) as they like.

The anonymous procession of grades

My other main concern was privacy and security. Report cards are formal assessments of student learning; they are important documents in a child’s life, and the information in them is confidential. If we send this information across the planet to OpenAI’s servers, who are we giving access to? What are we sharing, and are we free to do so?

Evaluating the prompts that were being generated for my fake students, I noticed that there were three main pieces of identifiable information being sent:

- Names

- Grades and rubric scores

- Gender

I settled on the following solutions for each:

Names – I tell OpenAI to generate a response for a student named “*”, then replace the asterisk with the student’s actual name once the text is sent back.

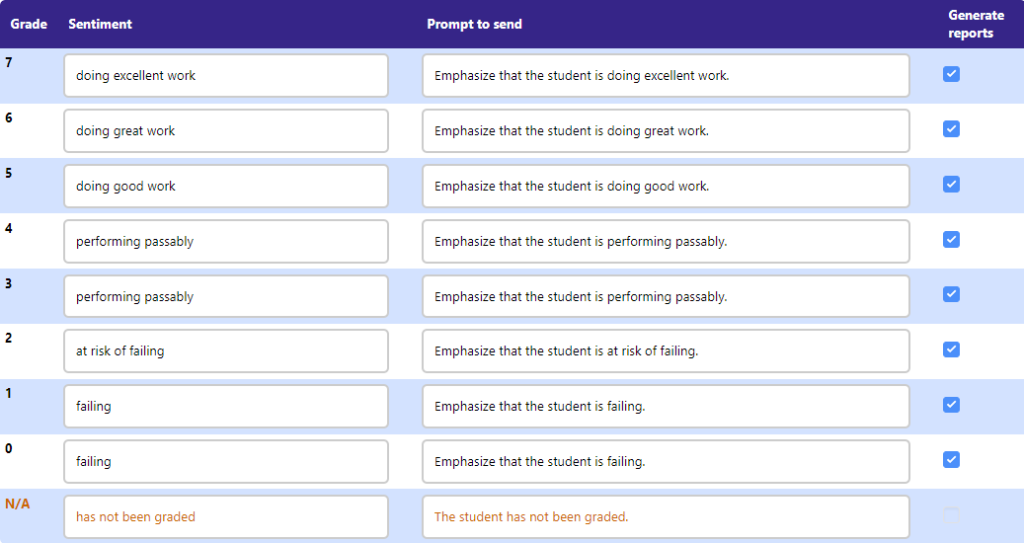
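The substitution step can be sketched in a few lines. This is a minimal illustration, not the extension’s code: `restore_name` is a hypothetical helper, and it assumes the comment comes back as plain text with `*` standing in for the name. Because the swap happens locally after the response arrives, the real name never has to be sent.

```python
# Hypothetical sketch: the extension's actual implementation is not shown in this post.
NAME_PLACEHOLDER = "*"  # the stand-in name sent to OpenAI instead of the student's name


def restore_name(comment: str, first_name: str, placeholder: str = NAME_PLACEHOLDER) -> str:
    """Replace every occurrence of the placeholder with the student's real first name.

    This runs locally, after the generated comment has been returned.
    """
    return comment.replace(placeholder, first_name)


if __name__ == "__main__":
    # "Maya" is a made-up example name.
    reply = "* has worked hard this term, and * should keep reviewing stoichiometry."
    print(restore_name(reply, "Maya"))
```

One trade-off with a bare `*`: if the model ever uses an asterisk for emphasis or a bullet point, that character would be replaced too, so a more distinctive token might be safer.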

Grades – I decided not to send grades to OpenAI, but rather a generalized sentiment summing up the student’s attainment. This conveys the feeling associated with the grade without actually sharing the grade itself.
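A mapping from grade to sentiment might look something like the sketch below. It assumes a 0 to 100 percentage scale and three tiers (struggling, passing, excelling); the cut-offs, the function name `grade_to_sentiment`, and every sentence except the “at risk of failing” line from the example prompt are hypothetical. An IB 1 to 7 scale would need different thresholds.

```python
# Hypothetical sketch: the cut-offs and all wording except the "at risk of failing"
# line (which appears in the example prompt) are assumptions for illustration.


def grade_to_sentiment(percent: float) -> str:
    """Turn a final percentage into a general sentiment line for the prompt.

    Only the returned sentence would be sent to OpenAI; the percentage stays local.
    """
    if not 0 <= percent <= 100:
        raise ValueError("percent must be between 0 and 100")
    if percent < 50:  # assumed failing threshold
        return "Emphasize that the student is at risk of failing."
    if percent < 80:  # assumed boundary between passing and excelling
        return "Emphasize that the student is passing but has room to grow."
    return "Emphasize that the student is excelling."
```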

Gender – I tell OpenAI to refer to each student using they/their/them by default, and the teacher can easily change this in the prompt. In the long term, I think the best solution is one like the name approach above: send a placeholder for each pronoun and swap in the correct pronoun once the response has returned. Unfortunately, the extension currently cannot access gender data from any data source.
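A sketch of that longer-term idea, under loud assumptions: the model would be asked to write the bracketed tokens `[SUBJ]`, `[OBJ]` and `[POSS]` wherever a pronoun belongs, and `restore_pronouns` (a hypothetical helper) would fill them in locally from a pronoun set the teacher picks. None of these tokens or names come from the extension.

```python
import re

# Hypothetical placeholder tokens and pronoun sets; none of these come from the extension.
PRONOUN_SETS = {
    "they": {"SUBJ": "they", "OBJ": "them", "POSS": "their"},
    "she": {"SUBJ": "she", "OBJ": "her", "POSS": "her"},
    "he": {"SUBJ": "he", "OBJ": "him", "POSS": "his"},
}
TOKEN = re.compile(r"\[(SUBJ|OBJ|POSS)\]")


def restore_pronouns(comment: str, pronoun_set: str = "they") -> str:
    """Replace [SUBJ]/[OBJ]/[POSS] tokens with the chosen pronouns, locally."""
    forms = PRONOUN_SETS[pronoun_set]

    def substitute(match: re.Match) -> str:
        word = forms[match.group(1)]
        # Capitalize if the token opens the text or follows sentence-ending punctuation.
        preceding = comment[: match.start()].rstrip()
        if not preceding or preceding[-1] in ".!?":
            return word.capitalize()
        return word

    return TOKEN.sub(substitute, comment)


if __name__ == "__main__":
    reply = "[SUBJ] should review [POSS] lab notes. Encourage [OBJ] to ask questions."
    print(restore_pronouns(reply, "she"))
```

This sketch ignores verb agreement: a sentence written as “[SUBJ] is” reads awkwardly once “they” is substituted, so a fuller version would need to account for that.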

The final prompt looks like this:

Write a report card for a student named * enrolled in IB DP Chemistry SL (Grade 12) 1.

Emphasize that the student is at risk of failing.

Recommend how they can improve, and give them feedback on their performance in class. Just provide the report, you don’t need a title or to write the grade.

Only use first names.

Use they/their/them pronouns.

You must talk about each of the following:

- The student has a Predicted DP Grade score of 4.

- The student has a Targeted Grade score of 3.

- The student has a Final DP Grade score of 4.

Do not write “the student” or “student”. Always refer to the student as *.
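The prompt above is regular enough to be assembled from gradebook fields. The following is a minimal sketch rather than the extension’s code: `build_prompt`, its parameters and the optional `teacher_notes` slot (for the kind of personalization described earlier) are hypothetical, and the score names are simply the ones from this example.

```python
# Hypothetical sketch: the function name, parameters and the teacher_notes slot are
# assumptions; the sentences themselves are taken from the example prompt above.


def build_prompt(
    course: str,
    sentiment: str,
    scores: dict[str, int],
    pronouns: str = "they/their/them",
    teacher_notes: str = "",
) -> str:
    """Assemble an anonymized report-comment prompt from gradebook data."""
    lines = [
        f"Write a report card for a student named * enrolled in {course}.",
        sentiment,
        "Recommend how they can improve, and give them feedback on their performance "
        "in class. Just provide the report, you don't need a title or to write the grade.",
        "Only use first names.",
        f"Use {pronouns} pronouns.",
        "You must talk about each of the following:",
    ]
    # One bullet per grade or rubric column pulled from the gradebook.
    lines += [f"- The student has a {name} score of {score}." for name, score in scores.items()]
    if teacher_notes:
        # Optional free-text instructions a teacher adds to personalize the comment.
        lines.append(teacher_notes)
    lines.append('Do not write "the student" or "student". Always refer to the student as *.')
    return "\n".join(lines)


if __name__ == "__main__":
    print(
        build_prompt(
            course="IB DP Chemistry SL (Grade 12) 1",
            sentiment="Emphasize that the student is at risk of failing.",
            scores={"Predicted DP Grade": 4, "Targeted Grade": 3, "Final DP Grade": 4},
        )
    )
```

Keeping the instructions as a list of fixed sentences makes it easy to see exactly what leaves the browser: only the course name, the sentiment line and the listed scores vary from student to student.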

Come What May

I’ve been running a closed beta for the past week, and overall the feedback has been curious and positive. However, many people also have reservations: they are worried about what the technology means and how it alters countless dynamics within a school. I’ve written a separate blog post offering some guidance for evaluating whether to adopt new technologies in your organization.

That said, I didn’t start this project to simplify a laborious process for teachers (or at least that wasn’t the primary driver). Rather, my goal was to move beyond speculation about what the future might look like and put the technology in context. That way, I can evaluate what it actually is, rather than relying on postulation and assumption.

To administrators hesitant to introduce this into their schools: frankly, that ship has already sailed. At this point, our responsibility as administrators and teachers is to evaluate what this technology actually produces in context, and to assess whether it has a place in our schools. Nuanced arguments will carry far more weight than those buttressed by fear.

Giving it a shot

If you’d like to try out the extension in its current form, sign up for our closed beta. Your feedback and conversation will help us to further survey the strange plains opening up before us. If you’d like to start a conversation, feel free to connect with me via email or LinkedIn.